Paraf 022 & Paraph 021

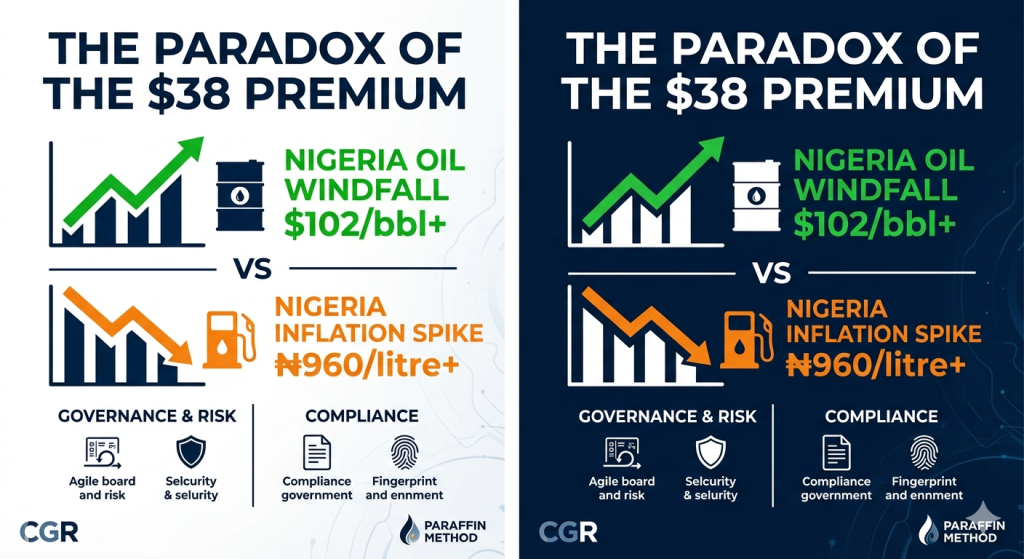

Oil prices are rising again—but this time, it’s not just energy.

It’s quietly reshaping Forex volatility, increasing ad costs, and squeezing affiliate margins across LATAM.

The real trade isn’t oil—it’s positioning before the ripple hits.

Right now the world is running on three engines:

- Geopolitics driving oil (and the supply shocks we’re seeing in the Strait of Hormuz)

- Inflation hammering economies and input costs

- AI shaping the next decade of explosive growth

If you’re building (or scaling) a CGR automation pipeline, these are exactly the macro signals your system must prioritize.

Energy + Macro + AI = the highest-value content zones, scoring in the 7–10 range.

Most creators chase noise. Smart operators engineer pipelines that detect these intersections in real time, turn them into scroll-stopping content, and convert them into predictable revenue—before the rest of the market even notices the ripple.

#CGR #MacroSignals

This isn’t speculation. It’s signal.

The volatility is here. The ad cost spikes are coming. The margin compression is already hitting LATAM affiliates.

The only question is: are you positioned—or just reacting?

Let’s build your funnel.

Drop a comment or your email and I’ll send you the exact framework I use to turn these macro signals into automated growth engines.

#LATAM #ForexEcosystems

https://finance.yahoo.com/video/oil-prices-still-surging-stocks-213206100.html